Following Australia, Three of the Five Eyes Consider Online Safety Legislation

U.S. government asks for feedback on how to tighten federal cybersecurity; Court rules AI cannot be an inventor; Hong Kong government issues ethical AI guidelines

Photo by zhenzhong liu on Unsplash

Over the summer, when the Wavelength was a lighter product, Australia enacted the “Online Safety Bill 2021” and the “Online Safety (Transitional Provisions and Consequential Amendments) Bill 2021” (see here for more detail and analysis of the bills as introduced). It appears that Australia was the first among the so-called Five Eyes nations to seek to regulate the online world for harm. Three of the four other Five Eyes nations are at various stages of legislating.

However, the United States (U.S.) Congress seems deadlocked on many of the issues presented by online harm and is, in any event, bounded by the First Amendment, which limits the extent to which the U.S. government may regulate speech. Of course, the First Amendment is not limitless, and the Supreme Court of the United States has allowed regulation of some speech in some ways, notably in the famous case from the First World War in which Justice Oliver Wendell Holmes formulated the yelling fire in a crowded theater scenario. Nonetheless, U.S. policy proposals have looked to change the legal protection platforms like Facebook and Twitter enjoy for the material others post on their platforms and for moderating, editing, and taking down some content (i.e., 47 USC 230, more popularly known as Section 230). And so, it appears very unlikely the U.S. would choose to regulate online harm and ills in the same way its “cousins” are looking to do.

I think some general comments about the proposed bills are warranted. London, Ottawa, and Wellington are struggling with many of the same issues. How does one define material or speech as harmful? According to whom? Anti-Semitic content isn’t welcome in my home, but it may be in others. Also, the potential extra-territorial reach of some of these laws poses legal and practical problems. Let’s say Canada succeeds in enacting a bill requiring the Twitters of the world to take down harmful material (according to Canada’s definition). Does this apply throughout the world? Or just in Canada? Moreover, what responsibilities would platforms have to monitor access to material that is legal in the U.S. but not in Canada?

The first stop on the tour is the United Kingdom (UK). A committee of the Parliament is beginning public and private hearings on its draft “Online Safety Bill” (see here for more detail and analysis on the bill as introduced in May 2021.) Here’s the beginning of what I wrote then on the bill:

§ The UK’s Department for Digital, Culture, Media & Sport (DCMS) published its long-awaited online harms bill that sets out the framework by which the UK proposes to regulate harmful and illegal online content. The UK follows Australia and the European Union in proposing legislation to regulate the online world. The Australian Parliament is currently considering the “Online Safety Bill 2021” and the “Online Safety (Transitional Provisions and Consequential Amendments) Bill 2021” (see here for more detail and analysis). The European Commission (EC) rolled out its Digital Services Act in December 2020 and is currently negotiating a final bill with other EU stakeholders (see here for more detail and analysis). And, of course, in the United States (U.S.), there have been calls from both political parties and many stakeholders to revise 47 U.S.C. 230 (aka Section 230), the liability shield many technology companies have to protect them from litigation arising from content they allow others to post. However, to date, no such legislation has advanced beyond mere introduction.

§ The British bill kicks a lot of details into the future where the regulator and government will have to sort key parts of the law. And so, implementation will prove crucial and likely another front where online platforms can make their cases.

The Joint Select Committee started public hearings last week on 9 September and heard from three panels of witnesses. Moreover, the committee has received extensive written input including from the Department for Digital, Culture, Media and Sport and the Home Office that laid out the government’s goals:

The UK’s bill has shades of bills and talking points Republicans have been flogging in Congress. The largest platforms will need to make clearer their rules and procedures for taking down “controversial” material. And like much of London’s emphasis on the harms the online world poses to children, their well-being and protection are at the forefront of this bill and its rationale.

As noted, the Joint Select Committee held a hearing on 9 September on the bill with these witnesses:

§ Mr Imran Ahmed, CEO and Founder at Center for Countering Digital Hate

§ Sanjay Bhandari, Chair at Kick It Out

§ Edleen John, Director of International Relations, Corporate Affairs and Co-Partner for Equality, Diversity and Inclusion at Football Association

§ Rio Ferdinand, former Manchester United player

§ Danny Stone MBE, Director at Antisemitism Policy Trust

§ Nancy Kelley, Chief Executive at Stonewall

In the unofficial transcript (and Parliament is quite firm that any users of this early transcript stress the fact it is not corrected by MPs, Lords, and witnesses), Center for Countering Digital Hate CEO and Founder Imran Ahmed claimed:

Ahmed is clearly targeting the COVID-19 disinformation that platforms have been struggling to manage amid pressure from many governments. Not long ago, U.S. President Joe Biden accused Facebook of killing people through the disinformation on its platform. But then Ahmed also blames the online platforms and the proliferation of disinformation for the 6 January insurrection and the abuse athletes of color experience. Ahmed cites profit as the primary reason why this sort of disinformation persists online. However, he had some advice on how to revise the Online Safety Bill.

Ahmed was asked for an assessment of the bill, and he responded:

I will not go through the entire transcript, but the above excerpts serve to explain one dominant perspective on online harms (i.e., misinformation and disinformation) and some of the perceived shortcomings of the bill. It bears noting that discontent about problems with the bill is filtering through to the media, including, at the least, the media’s attempts to get answers on who will be considered a journalist under the bill and hence exempt from much of the new regime. Naturally, the media is concerned about its place in any online harms bill, but other affected industries are obviously lobbying as furiously as possible to protect their interests, correct problems, and water down enforcement provisions. It will be interesting to see what a revised bill looks like.

Incidentally, the chair of the Joint Select Committee, Damian Collins MP, hosts an excellent podcast, Infotagion, and both its June 2021 episode and its most recent episode are on the Online Safety Bill. I might be wrong, but there are not many Members of the U.S. Congress with such podcasts.

Moreover, a different committee, the Digital, Culture, Media and Sport Sub-committee on Online Harms and Disinformation, is conducting an inquiry into “Online harms and disinformation,” a matter with obvious overlap. This sub-committee’s deadline for written input came on 3 September 2021, and the sub-committee will likely draft and issue a report sooner rather than later.

Next stop is Ottawa. This summer, the government of Canada introduced its own online harms bill, C-36, and the bill’s fate may well hinge on the outcome of Canada’s general election next week. Nonetheless, the current government in Ottawa is accepting comments on the bill until 25 September. To help Canadians and others comment, the government made available:

§ A discussion guide that summarizes and outlines the Government’s overall approach.

§ A technical paper that summarizes the proposed instructions to inform the upcoming legislation.

The Parliament offered this summary of the bill while the Parliamentary Information and Research Service of the Library of Parliament works on a more detailed, and one presumes a more authoritative, summary:

§ On 23 June 2021, the Minister of Justice introduced Bill C-36, An Act to amend the Criminal Code and the Canadian Human Rights Act and to make related amendments to another Act (hate propaganda, hate crimes and hate speech), in the House of Commons and it was given first reading.

§ Bill C-36 amends the Criminal Code to create a recognizance to keep the peace relating to hate propaganda and hate crime and to define “hatred” for the purposes of two hate propaganda offences. It also makes related amendments to the Youth Criminal Justice Act.

§ In addition, it amends the Canadian Human Rights Act to provide that it is a discriminatory practice to communicate or cause to be communicated hate speech by means of the Internet or other means of telecommunication in a context in which the hate speech is likely to foment detestation or vilification of an individual or group of individuals on the basis of a prohibited ground of discrimination. It authorizes the Canadian Human Rights Commission to accept complaints alleging this discriminatory practice and authorizes the Canadian Human Rights Tribunal to adjudicate complaints and order remedies.

In a press release, Ottawa explained its understanding of the online harms wrought by social media use:

§ Individuals and groups use social media platforms to spread hateful messaging. Indigenous Peoples and equity-deserving groups such as racialized individuals, religious minorities, LGBTQ2 individuals and women are disproportionately affected by hate, harassment, and violent rhetoric online. Hate speech harms the individuals targeted, their families, communities, and society at large. And it distorts the free exchange of ideas by discrediting or silencing targeted voices.

§ Social media platforms can be used to spread hate or terrorist propaganda, counsel offline violence, recruit new adherents to extremist groups, and threaten national security, the rule of law and democratic institutions. At their worst, online hate and extremism can incite real-world acts of violence in Canada and anywhere in the world, as was seen on January 29, 2017 at the Centre culturel islamique de Québec, and on March 15, 2019, in Christchurch, New Zealand.

§ Social media platforms are also used to sexually exploit children. Women and girls, predominantly, are victimized through the sharing of intimate images without the consent of the person depicted. These crimes can inflict grave and enduring trauma on survivors, which is made immeasurably worse as this material proliferates on the internet and social media.

§ Social media platforms have significant impacts on expression, democratic participation, national security, and public safety. These platforms have tools to moderate harmful content. Mainstream social media platforms have voluntary content moderation systems that flag and test content against their community guidelines. But some platforms take decisive action in a largely ad-hoc fashion. These responses by social media companies tend to be reactive in nature and may not appropriately balance the wider public interest. Also, social media platforms are not required to preserve evidence of criminal content or notify law enforcement about criminal content, outside of mandatory reporting for child pornography offences. More proactive reporting could make it easier to hold perpetrators to account for harmful online activities.

The government stressed it “is committed to confronting online harms while respecting freedom of expression, privacy protections, and the open exchange of ideas and debate online.”

Turning back to the discussion and technical papers the government made available, in the first the government contended:

New legislation would apply to ‘online communication service providers’.

The concept of online communication service provider is intended to capture major platforms, (e.g., Facebook, Instagram, Twitter, YouTube, TikTok, Pornhub), and exclude products and services that would not qualify as online communication services, such as fitness applications or travel review websites.

The legislation would not cover private communications, nor telecommunications service providers or certain technical operators. There would be specific exemptions for these services.

The legislation would also authorize the Government to include or exclude categories of online communication service providers from the application of the legislation within certain parameters.

The legislation would target five categories of harmful content:

· terrorist content;

· content that incites violence;

· hate speech;

· non-consensual sharing of intimate images; and

· child sexual exploitation content.

While all of the definitions would draw upon existing law, including current offences and definitions in the Criminal Code, they would be modified in order to tailor them to a regulatory – as opposed to criminal – context.

These categories were selected because they are the most egregious kinds of harmful content. The Government recognizes that there are other online harms that could also be examined and possibly addressed through future programming activities or legislative action.

The Liberal Party government continued:

In addition to the legislative amendments proposed under Bill C-36, further modifications to Canada’s existing legal framework to address harmful content online could include:

§ modernizing An act respecting the mandatory reporting of Internet child pornography by persons who provide an Internet service, (referred to as the Mandatory Reporting Act) to improve its effectiveness; and

§ amending the Canadian Security and Intelligence Service Act to streamline the process for obtaining judicial authority to acquire basic subscriber information of online threat actors.

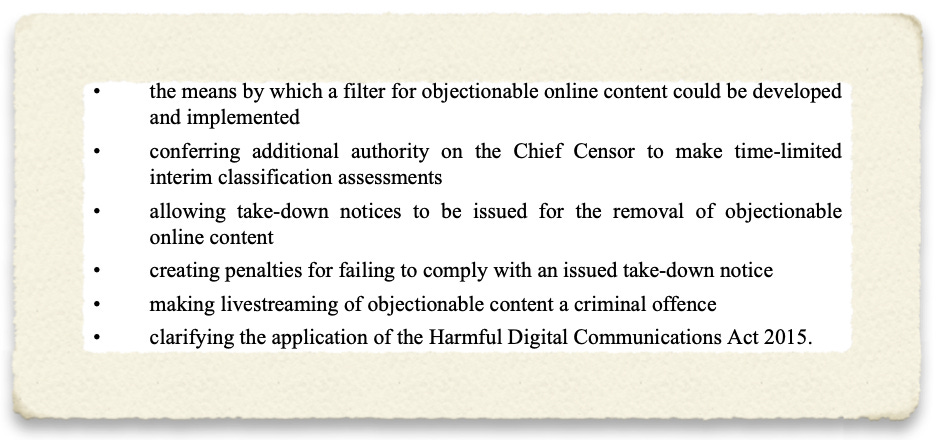

New Zealand is also in the midst of considering an online harms bill, the “Films, Videos, and Publications Classification (Urgent Interim Classification of Publications and Prevention of Online Harm) Amendment Bill.” This bill was driven by the livestreaming of the Christchurch mosque attacks, as explained by the bill’s new sponsor in February 2021:

This bill addresses specific legislative and regulatory gaps in our current online content regulation. These were highlighted in the tragic events of the Christchurch mosque attacks on 15 March 2019. The terrorist of the Christchurch attacks sought to exploit online platforms to promote his acts of hate-based violence. Following the original livestream broadcast, footage of the attacks spread across the internet through social media, and in the days that followed we saw thousands of links appear across our social media platforms. Unfortunately, some of these links were able to autoplay. Viewing this type of content can be extremely harmful and distressing, and I'm sure that members of this House, like me, know of people—particularly young children—who saw those links and viewed that content, and it was extremely harmful to them. I can only imagine how hard that must be to know that that content was available to the families of the victims and the survivors.

On 13 September, the Governance and Administration Committee published its final report on the bill with the recommendation that the bill be passed with proposed amendments. The committee stated:

The committee stated that the bill, as introduced, proposes:

The committee explained the changes it made to the bill, including the removal of the electronic filtering provisions, which the committee noted were opposed by most people and entities that commented on the bill:

Moreover, regarding the electronic filtering systems, the committee added:

The bill as introduced did not specify the design of an electronic filter or how exactly it would operate. The lack of detail about the filter’s design, scope, and operation was a significant concern for us and for submitters.

Finally, the report also includes a helpful blackline version of the proposed changes showing where and how the committee is advising the government to revise.

Other Developments

Photo by Simon Zhu on Unsplash

§ The Office of Management and Budget (OMB) and the Cybersecurity and Infrastructure Security Agency (CISA) “are seeking public feedback on strategic and technical guidance documents meant to move the U.S. government towards a zero trust architecture.” OMB and CISA issued these draft documents per Executive Order 14028 “Improving the Nation's Cybersecurity”. The agencies stated:

o Read and comment on OMB’s Federal Zero Trust Strategy. The goal of this strategy is to accelerate agencies towards a shared baseline of early zero trust maturity.

o Read and comment on CISA’s Zero Trust Maturity Model. The maturity model complements OMB’s Federal Zero Trust Strategy, and is designed to provide agencies with a roadmap and resources to achieve an optimal zero trust environment.

o Read and comment on CISA’s Cloud Security Technical Reference Architecture, a guide for agencies to leverage when migrating to the cloud securely. The document explains considerations for shared services, cloud migration, and cloud security posture management.

§ Hong Kong’s Office of the Privacy Commissioner for Personal Data (PCPD) “issued the “Guidance on the Ethical Development and Use of Artificial Intelligence” (Guidance) to help organisations understand and comply with the relevant requirements of the Personal Data (Privacy) Ordinance (PDPO) when they develop or use AI.” The PCPD claimed:

o The Guidance recommends that organisations embrace three fundamental Data Stewardship Values when they develop and use AI, namely, being respectful, beneficial and fair to stakeholders. In line with international standards, the Guidance sets out the following seven ethical principles for AI:

§ Accountability – Organisations should be responsible for what they do and be able to provide sound justifications for their actions;

§ Human Oversight – Organisations should ensure that appropriate human oversight is in place for the operation of AI;

§ Transparency and Interpretability – Organisations should disclose their use of AI and relevant policies while striving to improve the interpretability of automated decisions and decisions made with the assistance of AI;

§ Data Privacy – Effective data governance should be put in place;

§ Fairness – Organisations should avoid bias and discrimination in the use of AI;

§ Beneficial AI – Organisations should use AI in a way that provides benefits and minimises harm to stakeholders; and

§ Reliability, Robustness and Security – Organisations should ensure that AI systems operate reliably, can handle errors and are protected against attacks.

o The Guidance also provides a set of practice guide, structured in accordance with general business processes, to assist organisations in managing their AI systems. The practice guide covers four main areas:

§ Establish AI strategy and governance;

§ Conduct risk assessment and human oversight;

§ Execute development of AI models and management of overall AI Systems; and

§ Foster communication and engagement with stakeholders.

§ The National Institute of Standards and Technology (NIST) issued a whitepaper titled “DRAFT Baseline Security Criteria for Consumer IoT Devices” per Executive Order 14028 “Improving the Nation's Cybersecurity”. NIST explained:

o Executive Order (EO) 14028, “Improving the Nation’s Cybersecurity,” tasks the National Institute of Standards and Technology (NIST), in coordination with the Federal Trade Commission (FTC) and other agencies, to initiate pilot programs informed by existing consumer product labeling programs to educate the public on the security capabilities of Internet-of-Things (IoT) devices and software development practices. NIST also is to consider ways to incentivize manufacturers and developers to participate in these programs. This white paper proposes baseline security criteria for consumer IoT devices. This is one of three dimensions of a consumer Internet of Things (IoT) cybersecurity labeling program that would be responsive to Sections 4 (s) and (t) of the EO. The other dimensions are criteria for conformity assessment and the label. In addition to the feedback sought on this white paper, NIST will also consult with stakeholders on those additional considerations.

o NIST will identify key elements of labeling programs in terms of minimum requirements and desirable attributes. Rather than establishing its own programs, NIST will specify desired outcomes, allowing providers and customers to choose the best solutions for their devices and environments. One size may not fit all, and multiple solutions might be offered by label providers.

§ A number of Florida Members of Congress, mostly Republicans, wrote Attorney General Merrick Garland about the Department of Justice’s announcement that a number of United States (U.S.) Attorneys’ Offices may have been compromised in the SolarWinds hack. They stated:

o The DOJ confirmed the breach affected 80 percent of Microsoft email accounts used by USAO employees in New York, but did not provide additional information on the extent of the hack or its effect on Florida USAOs or offices in other identified states. This announcement is alarming as USAO email servers contain highly sensitive information. Florida USAOs are responsible for the prosecution of some of the most significant federal crimes, including crimes related to drugs and trafficking. Additionally, according to recent media reports the DOJ launched an internal review of cybersecurity procedures in April 2021. The DOJ was reportedly conducting a 120-day review of interagency cybersecurity challenges. However, the DOJ did not formally announce this review and does not appear to have publically shared any information about the scope of this review. We are confident that the DOJ and other involved federal agencies are working tirelessly to prevent cyberattacks. However, the breach of USAO email servers is very concerning.

o Accordingly, we ask that you answer the following questions no later than Friday, October 1, 2021:

§ 1. What USAO information was compromised as a result of this hack?

§ 2. Was sensitive information, such as witness information, victim information, or information that relates to national security compromised? If so, please describe the types of information compromised.

§ 3. What steps have you taken to ensure the vulnerabilities that led to this intrusion have been remedied?

§ 4. During your confirmation hearing, you committed to a whole-of-government response to cyber threats. Please describe the current collaborative framework within the federal government for interagency coordination on cyber threats. Please describe the President’s strategy for a whole-of-government coordinated response to responding to and preventing cyberattacks, including which individual is leading this effort.

§ 5. In response to a question at your confirmation hearing, you said you would use the full power of the DOJ to combat cyber threats. You also said that you would fully support the President and his National Security team’s efforts on cybersecurity. What have been the efforts of the President related to cybersecurity that you have supported since you were confirmed?

§ 6. In June, you announced you were doubling the number of attorneys working on voting rights at the DOJ. Given the significant threat to America posed by cyberattacks, how much have you increased the number of attorneys or other staff working on cybersecurity issues?

§ The Securities and Exchange Commission (SEC) “filed an action against BitConnect, an online crypto lending platform, its founder Satish Kumbhani, and its top U.S. promoter and his affiliated company, alleging that they defrauded retail investors out of $2 billion through a global fraudulent and unregistered offering of investments into a program involving digital assets.” The SEC asserted:

o According to the SEC's complaint, filed in the United States District Court for the Southern District of New York, from early 2017 through January 2018, Defendants conducted a fraudulent and unregistered offering and sale of securities in the form of investments in a "Lending Program" offered by BitConnect. The complaint alleges that, to induce investors to deposit funds into the purported Lending Program, Defendants falsely represented, among other things, that BitConnect would deploy its purportedly proprietary "volatility software trading bot" that, using investors' deposits, would generate exorbitantly high returns. However, the SEC alleges that instead of deploying investor funds for trading with the purported trading bot, defendants BitConnect and Kumbhani siphoned investors' funds off for their own benefit by transferring those funds to digital wallet addresses controlled by them, their top promoter in the U.S., defendant Glenn Arcaro, and others. The SEC's complaint further alleges that BitConnect and Kumbhani established a network of promoters around the world, and rewarded them for their promotional efforts and outreach by paying commissions, a substantial portion of which they concealed from investors. According to the complaint, among these promoters was Arcaro, the lead national promoter of BitConnect for the United States who used the website he created, Future Money, to lure investors into the Lending Program.

§ Switzerland’s Federal Data Protection and Information Commissioner (FDPIC) published guidance titled “The transfer of personal data to a country with an inadequate level of data protection based on recognised standard contractual clauses and model contracts.” The FDPIC stated:

o …the FDPIC recognises the standard contractual clauses for the transfer of personal data to third countries in accordance with Regulation (EU) 2016/679 of the European Parliament and of the Council (pursuant to Implementing Decision 2021/914/EU) as the basis for personal data transfers to a country without an adequate level of data protection, provided that the necessary adaptations and amendments are made for use under Swiss data protection law.

§ The United States (U.S.) Patent and Trademark Office (PTO) prevailed in U.S. court in its determination that an artificial intelligence machine cannot be an inventor under U.S. law. The court summed up its finding:

§ Minnesota’s Commerce Department announced that “Minnesota consumers have new protections to protect the privacy of data they provide to insurance companies, including personal and financial information, through a new law championed by the Minnesota Department of Commerce.” The Department further claimed:

o In recent years, there have been several major data breaches involving large insurers that have exposed and compromised the sensitive personal information of millions of insurance consumers. The NAIC Data Security Model Act, passed by the 2021 Minnesota Legislature, adopts a model insurance law proposed by the National Association of Insurance Commissioners (NAIC). The NAIC model law has been enacted by 18 states, including Minnesota. The U.S. Treasury has urged states to adopt the NAIC model law or the administration plans to ask Congress to preempt states to take action.

o “This new law serves as a guide for Minnesota insurance businesses on how to prepare for, and react to, a data incident,” said Commerce Commissioner Grace Arnold. “Being prepared, prioritizing consumer privacy and increasing public disclosure will better protect all Minnesotans.”

o The new law applies to insurers, insurance agents, and other insurance-related entities licensed by the Department of Commerce and asks them to do three things:

§ To create a plan on how to deal with cybersecurity events.

§ To work this plan and investigate cybersecurity events if they think one has occurred.

§ To notify the Department of Commerce and to notify consumers when a cybersecurity event has occurred.

o These three requirements will increase the likelihood that companies are prepared for the internal and external data threats they face and help consumers take immediate steps to protect themselves when their private data is exposed.

o Protecting the privacy of consumer data has been a priority for Commerce and the NAIC. Commerce continues to work with NAIC committees on additional policy ideas for consumer privacy protection.

o States that, as of Aug. 3, had adopted the NAIC Data Security Model Act are: Alabama, Connecticut, Delaware, Indiana, Iowa, Hawaii, Louisiana, Maine, Michigan, Minnesota, Mississippi, New Hampshire, North Dakota, Ohio, South Carolina, Tennessee, Virginia and Wisconsin.

§ The United Kingdom’s Office for Science and Council for Science and Technology published a report titled “Harnessing technology for the long-term sustainability of the UK’s healthcare system.” The entities claimed:

o Changing environments and lifestyles, an ageing population and an increased need for managing chronic and multiple long-term conditions in the UK will challenge our health system and increase healthcare costs. Often those people most in need of healthcare (such as older, rural and socially deprived populations) have the most difficulty accessing service delivery centres. In some cases, there is also a variation in quality and quantity of healthcare provision. Such healthcare inequalities are driving disparities in health outcomes within and between regions.

o There are challenges within the system itself. Structural silos exist at several levels; within the medical field there is a division into specialisms, within the National Health Service (NHS) there is an administrative and financial division into trusts, worsened by a lack of interoperability, and across the wider system there is a separation of health from social care and public health services. These structures reduce the ease with which information from one part of the system can be shared with other parts, can complicate a patient’s journey through the system, and may waste time and resources. Taken together, these barriers to flow of information affect the quality and integration of care. Furthermore, the composition and distribution of the healthcare workforce is changing, with difficulties such as staff shortages serving to compound the strain on service delivery.

o The COVID-19 pandemic has further exposed the limitations of the current system, highlighting health inequalities, the challenges to integrated health and social care and the shortcomings in our approach to public and population health. It has also led to further pressures on the system, with a backlog of treatment requirements that may take considerable time to clear.

o As currently structured, spending on the NHS would need to increase to maintain long term sustainability of the service. Under the current system, a continued drive for health service efficiency and cost reductions could further reduce resilience to emerging crises and further increase inequalities. As the government’s white paper on health and social care recognises, a change in approach is needed.

§ The National Institute of Standards and Technology (NIST) announced that “Draft NISTIR 8286B, Prioritizing Cybersecurity Risk for Enterprise Risk Management, is now available for public comment.” NIST stated:

o This report continues an in-depth discussion of the concepts introduced in NISTIR 8286, Integrating Cybersecurity and Enterprise Risk Management (ERM), with a focus on the use of enterprise objectives to prioritize, optimize, and respond to cybersecurity risks.

o The NISTIR 8286 series of documents is intended to help organizations better implement cybersecurity risk management (CSRM) as an integral part of ERM – both taking its direction from ERM and informing it. The increasing frequency, creativity, and severity of cybersecurity attacks mean that all enterprises should ensure that cybersecurity risk is receiving appropriate attention within their ERM programs and that the CSRM program is anchored within the context of ERM.

o This publication draws upon processes and templates described in NISTIR 8286A, Identifying and Estimating Cybersecurity Risk for Enterprise Risk Management (ERM), and on feedback received on public comment drafts of that report. Draft NISTIR 8286B extends the use of stakeholders’ risk appetite and risk tolerance statements to define risk expectations. It further describes the use of the risk register and risk detail report templates to communicate and coordinate activity.

o Since enterprise resources are nearly always limited, and must also fund other enterprise risks, it is vital that CSRM work at all levels be coordinated and prioritized to maximize effectiveness and to ensure that the most critical needs are adequately addressed. Risk prioritization, risk response, and risk aggregation should be aggregated and optimized to help guide enterprise risk communication and decision-making. Through effective prioritization and response, based on accurate risk analysis in light of business objectives, managers throughout the enterprise will be able to navigate a changing risk landscape and take advantage of innovation opportunities.

o A third companion document, NISTIR 8286C, which will detail processes for enterprise-level aggregation and oversight of cybersecurity risks, is being developed and will be available for review and comment in the coming months.

Further Reading

§ “Sensitive government data could be another casualty of Afghan pullout” By Joseph Marks — The Washington Post. Among the many long-term costs of the rapid fall of the Afghan government and the swift withdrawal of U.S. diplomatic and military personnel, count this one: Troves of sensitive U.S. government data are surely being left behind in the nation now under Taliban control. The vast majority of classified information that lived on U.S. embassy computers was almost certainly flown out of Afghanistan or destroyed. A lot of government's highly sensitive data is also housed in computer clouds rather than on hard drives and protected with multiple security controls. But reams of unclassified but sensitive material will probably remain in the country, both in digital forms and on paper.

§ “‘Vaccine passports’ to combine jab records with QR check-ins for more freedoms” By David Crowe — The Age. Millions of vaccinated Australians will be able to use their mobile phones to gain exemptions to lockdown rules at cafes, restaurants and public events under a national cabinet plan to use digital records to verify vaccine status. A federal vaccine record will be combined with state check-in systems to expand the use of QR codes at public venues to be sure those who gain entry have been immunised against COVID-19.

§ “Amazon to proactively remove more content that violates rules from cloud service -sources” By Sheila Dang — Reuters. Amazon.com Inc plans to take a more proactive approach to determine what types of content violate its cloud service policies, such as rules against promoting violence, and enforce its removal, according to two sources, a move likely to renew debate about how much power tech companies should have to restrict free speech. Over the coming months, Amazon will hire a small group of people in its Amazon Web Services (AWS) division to develop expertise and work with outside researchers to monitor for future threats, one of the sources familiar with the matter said.

§ “Amazon denies reports that it will proactively moderate content on its hosting service” By Russell Brandom — The Verge. Amazon is planning to expand its in-house moderation team for Amazon Web Services, according to a report published on Thursday by Reuters. Citing two sources, the report says Amazon is planning to use the new workforce to proactively remove more prohibited content from AWS before it’s reported by users. Reached for comment on Thursday, Amazon said it did not plan to pre-review content before it is posted on the platform, but declined to confirm or deny specifics. On Friday, however, Amazon followed up with a more strongly worded statement directly contesting that the team’s methodology would change.

§ “How Facebook Undermines Privacy Protections for Its 2 Billion WhatsApp Users” By Craig Silverman — ProPublica. Clarification, Sept. 8, 2021: A previous version of this story caused unintended confusion about the extent to which WhatsApp examines its users’ messages and whether it breaks the encryption that keeps the exchanges secret. We’ve altered language in the story to make clear that the company examines only messages from threads that have been reported by users as possibly abusive. It does not break end-to-end encryption. When Mark Zuckerberg unveiled a new “privacy-focused vision” for Facebook in March 2019, he cited the company’s global messaging service, WhatsApp, as a model. Acknowledging that “we don’t currently have a strong reputation for building privacy protective services,” the Facebook CEO wrote that “I believe the future of communication will increasingly shift to private, encrypted services where people can be confident what they say to each other stays secure and their messages and content won’t stick around forever. This is the future I hope we will help bring about. We plan to build this the way we’ve developed WhatsApp.”

§ “New Zealand internet outage blamed on DDoS attack on nation's third largest internet provider” By Tim Richardson — The Register. Parts of New Zealand were cut off from the digital world today after a major local ISP was hit by an aggressive DDoS attack. Vocus – the country's third-largest internet operator which is behind brands including Orcon, Slingshot and Stuff Fibre – confirmed the cyberattack originated at one of its customers. According to a network status update, the company said: "This afternoon a Vocus customer was under DDoS attack... A DDoS mitigation rule was updated to our Arbor DDoS platform to block the attack for the end customer."

§ “China’s New Data Security Law Will Provide It Early Notice Of Exploitable Zero Days” By Brad D. Williams — Breaking Defense. China’s new Data Security Law, which takes effect today, includes cyber vulnerability disclosure provisions that will provide its government with nearly exclusive early access to a steady stream of zero-day vulnerabilities — potentially to include those discovered in technologies used by the Defense Department and Intelligence Community. Armed with that information, experts fear, China could exploit cyber vulnerabilities in tech used broadly across the US public and private sectors.

§ “Ransomware’s next target: Schools” By Laurens Cerulus — Politico EU. Cybercriminals, like anxious parents, are also waiting for schools to reopen. As children prepare for the new academic year, schools are following hospitals, energy firms and food makers as the next prime target for gangs of hackers. Gangs using ransomware — often operating from Russia — target low-tech sectors like health care, utilities and manufacturing services, which increasingly rely on digital tools but often lag in investing in cybersecurity to protect their systems. It makes for low-effort, high-reward targets for ransomware criminals.

§ “Tesla must deliver Autopilot crash data to federal auto safety watchdog by October 22” By Lora Kolodny — CNBC. The National Highway Traffic Safety Administration has added a 12th crash into the scope of its investigation into Tesla’s Autopilot system, and is demanding that the company provide an exhaustive amount of data about its driver assistance systems by Oct. 22.

§ “FBI says Chinese authorities are hacking US-based Uyghurs” By Carly Page — TechCrunch. The FBI has warned that the Chinese government is using both in-person and digital techniques to intimidate, silence and harass U.S.-based Uyghur Muslims. The Chinese government has long been accused of human rights abuses over its treatment of the Uyghur population and other mostly Muslim ethnic groups in China’s Xinjiang region. More than a million Uyghurs have been detained in internment camps, according to a United Nations human rights committee, and many other Uyghurs have been targeted and hacked by state-backed cyberattacks. China has repeatedly denied the claims.

§ “NSO Group Affiliate Circles Sold Equipment to Uzbekistan ‘Secret Police’” By Scott Stedman — Forensic News. Shipping records reveal that a lesser-known affiliate of the hack-for-hire company NSO Group supplied equipment in 2020 to Uzbekistan’s national intelligence agency (SGB), often referred to as a “secret police” force with a record of brutality and oppression. NSO Group creates hacking tools that governments around the world have purchased to ostensibly monitor terrorists and other criminals. However, the tools have often been used by autocratic regimes to spy on journalists and dissidents.

§ “West lacks clear plan to rival China’s ‘Belt and Road,’ Estonia says” By Laurens Cerulus — Politico EU. Europe, the U.S. and their allies need a better, single infrastructure investment project to rival China's Belt and Road Initiative, according to Estonian Prime Minister Kaja Kallas. "We have many initiatives that actually tackle the same issue," Kallas told POLITICO in an interview, singling out the Blue Dot Network initiative by the U.S., Australia and Japan, and the Three Seas Initiative that covers Eastern Europe and has the support of the EU and U.S. "We need to connect them all ... This is what I feel is lacking," Kallas said.

§ “Report details how Airbus pilots saved the day when all three flight computers failed on landing” By Richard Speed — The Register. Airbus is to implement a software update for its A330 aircraft following an incident in 2020 where all three primary flight computers failed during landing. The result was a loss of thrust reversers and autobrake systems and the pilots having to use manual braking to bring the aircraft, a China Airlines A330-302, to a halt just 30 feet before the end of the runway. The incident happened at Taipei Songshan Airport on 14 June 2020. The flight, CI202 from Shanghai with 87 passengers and nine cabin crew members, had been uneventful. The landing, however, was anything but.

§ “Pro-China social media campaign hits new countries, blames U.S. for COVID” By Joseph Menn — Reuters. A misinformation campaign on social media in support of Chinese government interests has expanded to new languages and platforms, and it even tried to get people to show up to protests in the United States, researchers said on Wednesday. Experts at security company FireEye (FEYE.O) and Alphabet’s (GOOGL.O) Google said the operation was identified in 2019 as running hundreds of accounts in English and Chinese aimed at discrediting the Hong Kong democracy movement. The effort has broadened its mission and spread from Twitter (TWTR.N), Facebook (FB.O) and Google to thousands of handles on dozens of sites around the world.

Coming Events

Photo by Edwin Andrade on Unsplash

§ 14 September

o The European Data Protection Board (EDPB) will hold a plenary meeting.

o The United Kingdom’s House of Commons’ Digital, Culture, Media and Sport Committee will have a hearing in its “Influencer culture” inquiry.

o The National Institute of Standards and Technology (NIST) will hold a virtual public workshop “on challenges and practical approaches to initiating cybersecurity labeling efforts for Internet of Things (IoT) devices and consumer software.” NIST added:

§ The workshop will help NIST to carry out an Executive Order (EO) on Improving the Nation’s Cybersecurity. The agenda for the workshop will include facilitated panel discussions and presentations based on consumer software labeling position papers submitted to NIST and on preliminary feedback on potential IoT baseline security criteria that was shared by NIST in August.

§ According to the EO, by February 6, 2022, in coordination with the Federal Trade Commission (FTC) and other agencies, NIST is required to:

§ identify IoT cybersecurity criteria for a consumer labeling program and

§ identify secure software development practices or criteria for a consumer software labeling program.

§ 15 September

o The Federal Trade Commission (FTC) will hold an open meeting with the following tentative agenda:

§ Proposed Policy Statement on Privacy Breaches by Health Apps and Connected Devices: The Commission will vote on whether to issue a policy statement on the importance of protecting the public from privacy breaches by health apps and other connected devices.

§ Non-HSR Reported Acquisitions by Select Technology Platforms, 2010-2019: An FTC Study: Staff will present some findings from the Commission’s inquiry into large technology platforms’ unreported acquisitions, including an analysis of the structure of deals that customarily fly under enforcers’ radar. The public release of the report is subject to a Commission vote.

§ Proposed Revisions to FTC Procedural Rules Concerning Petitions for Rulemaking: The Commission will vote on putting in place a process to receive public input on rulemaking petitions by external stakeholders.

§ Proposed Withdrawal of 2020 Vertical Merger Guidelines: The Commission will vote on whether to rescind the Vertical Merger Guidelines adopted in June 2020 and the Commentary on Vertical Merger Enforcement issued in December 2020.

o The National Institute of Standards and Technology (NIST) will hold a virtual public workshop “on challenges and practical approaches to initiating cybersecurity labeling efforts for Internet of Things (IoT) devices and consumer software.” NIST added:

§ The workshop will help NIST to carry out an Executive Order (EO) on Improving the Nation’s Cybersecurity. The agenda for the workshop will include facilitated panel discussions and presentations based on consumer software labeling position papers submitted to NIST and on preliminary feedback on potential IoT baseline security criteria that was shared by NIST in August.

§ According to the EO, by February 6, 2022, in coordination with the Federal Trade Commission (FTC) and other agencies, NIST is required to:

§ identify IoT cybersecurity criteria for a consumer labeling program and

§ identify secure software development practices or criteria for a consumer software labeling program.

§ 23 September

o The United Kingdom Parliament’s Joint Committee on the Draft Online Safety Bill will hold a hearing on the government’s draft “Online Safety Bill.”

§ 28 September

o The Information Security and Privacy Advisory Board (ISPAB) will hold an open meeting. The agenda is expected to include the following items:

§ Board Discussion on Executive Order 14028, Improving the Nation's Cybersecurity (May 12, 2021) deliverables and impacts to date

§ Presentation by NIST, the Department of Homeland Security, and the General Services Administration on upcoming work specified in Executive Order 14028

§ Presentation by the Office of Management and Budget on Executive Order 14028 directions and memoranda to U.S. Federal Agencies

§ Board Discussion on recommendations and issues related to Executive Order 14028

§ 30 September

o The Federal Communications Commission (FCC) will hold an open meeting with this tentative agenda:

§ Promoting More Resilient Networks. The Commission will consider a Notice of Proposed Rulemaking to examine the Wireless Network Resiliency Cooperative Framework, the FCC’s network outage reporting rules, and strategies to address the effect of power outages on communications networks. (PS Docket Nos. 21-346, 15-80; ET Docket No. 04-35)

§ Reassessing 4.9 GHz Band for Public Safety. The Commission will consider an Order on Reconsideration that would vacate the 2020 Sixth Report and Order, which adopted a state-by-state leasing framework for the 4.9 GHz (4940-4990 MHz) band. The Commission also will consider an Eighth Further Notice of Proposed Rulemaking that would seek comment on a nationwide framework for the 4.9 GHz band, ways to foster greater public safety use, and ways to facilitate compatible non-public safety access to the band. (WP Docket No. 07-100)

§ Authorizing 6 GHz Band Automated Frequency Coordination Systems. The Commission will consider a Public Notice beginning the process for authorizing Automated Frequency Coordination Systems to govern the operation of standard-power devices in the 6 GHz band (5.925-7.125 GHz). (ET Docket No. 21-352)

§ Spectrum Requirements for the Internet of Things. The Commission will consider a Notice of Inquiry seeking comment on current and future spectrum needs to enable better connectivity relating to the Internet of Things (IoT). (ET Docket No. 21-353)

§ Shielding 911 Call Centers from Robocalls. The Commission will consider a Further Notice of Proposed Rulemaking to update the Commission's rules regarding the implementation of the Public Safety Answering Point (PSAP) Do-Not-Call registry in order to protect PSAPs from unwanted robocalls. (CG Docket No. 12-129; PS Docket No. 21-343)

§ Stopping Illegal Robocalls From Entering American Phone Networks. The Commission will consider a Further Notice of Proposed Rulemaking that proposes to impose obligations on gateway providers to help stop illegal robocalls originating abroad from reaching U.S. consumers and businesses. (CG Docket No. 17-59; WC Docket No. 17-97)

§ Supporting Broadband for Tribal Libraries Through E-Rate. The Commission will consider a Notice of Proposed Rulemaking that proposes to update sections 54.500 and 54.501(b)(1) of the Commission’s rules to amend the definition of library and to clarify Tribal libraries are eligible for support through the E-Rate Program. (CC Docket No. 02-6)

§ Strengthening Security Review of Companies with Foreign Ownership. The Commission will consider a Second Report and Order that would adopt Standard Questions – a baseline set of national security and law enforcement questions – that certain applicants with reportable foreign ownership must provide to the Executive Branch prior to or at the same time they file their applications with the Commission, thus expediting the Executive Branch’s review for national security and law enforcement concerns. (IB Docket No. 16-155)