FTC Issues Resolutions To Ease Compulsory Process In A Number of Investigations

Committee approves new ICO head; New CFPB head moves closer to vote; UN GGE issues final report

Photo by Parker Johnson on Unsplash

The Federal Trade Commission (FTC) held two meetings last week at which the three Democratic Commissioners continued to lay the groundwork for aggressive future action against a number of large companies and the technology field.

At the closed meeting last week, the FTC voted 3-2 “to approve and make public a series of resolutions that will enable agency staff to efficiently and expeditiously investigate conduct in core FTC priority areas over the next ten years.”

The FTC’s Bureau of Consumer Protection and Bureau of Competition had sent a joint recommendation to the Commissioners to “authorize eight new compulsory process resolutions in these essential areas:

(1) Acts or Practices Affecting United States Armed Forces Service Members and Veterans

(2) Acts or Practices Affecting Children

(3) Bias in Algorithms and Biometrics

(4) Deceptive and Manipulative Conduct on the Internet

(5) Repair Restrictions

(6) Abuse of Intellectual Property

(7) Common Directors and Officers and Common Ownership

(8) Monopolization Offenses”

In practical terms, the resolutions allow FTC staff to go to one Commissioner and get them to sign subpoenas and civil investigative demands in any of the above areas, some of which are quite broad. Consequently, the matter would not need to come before the whole Commission, allowing for more streamlined and expedited investigations.

The majority framed these resolutions as a means to expedite staff investigations across the eight areas, while the minority claimed the agency lacked the authority to take this step. Moreover, some of these issues are considered more pressing than others depending on whether one consults FTC Chair Lina Khan and Commissioners Rohit Chopra and Rebecca Kelly Slaughter or Commissioners Noah Joshua Phillips and Christine S. Wilson. To the latter two Commissioners, the FTC is engaged in overreach that will ultimately hurt consumers by harming the innovation and dynamism of U.S. technology markets, leaving people in the U.S. with worse products at higher prices. Khan, Chopra, and Slaughter have broadly disagreed with this position and see the FTC as an agency with a central, active role to play in ensuring people and businesses are not injured by the unfair, deceptive, and anti-competitive practices that are widespread in American markets today.

In this vein, FTC Chair Lina Khan and Commissioner Rebecca Kelly Slaughter explained what they see as the rationale for the omnibus resolutions:

Khan and Slaughter argued these omnibus resolutions were necessary given the declining funding for the agency (when measured against the rate of inflation):

Nonetheless, it is not clear how the acquisition of more information and data on these investigations would address the staff and funding constraints the agency faces.

Phillips and Wilson voted against the omnibus resolutions and issued a dissent in which they claimed:

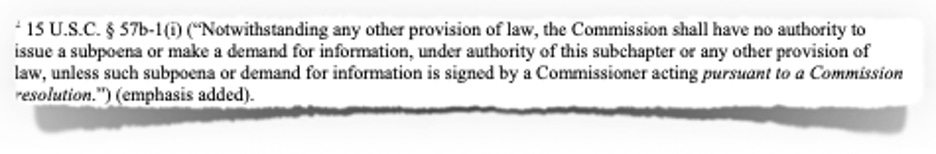

Being the curious sort, I looked at Footnote 2, which supports Phillips and Wilson’s claim that Congress gave the entire Commission, but not a Commissioner or staff, the right to “bless compulsory process in investigations”:

Phillips and Wilson have gone to the FTC Act and quoted the relevant section. And yet, the statute clearly states that, per an FTC-passed resolution, a sole Commissioner may sign subpoenas and demands for information, giving them legal force. Perhaps their argument is that the omnibus resolutions somehow violate the spirit of the FTC Act, but this is not a colorable argument for a few reasons.

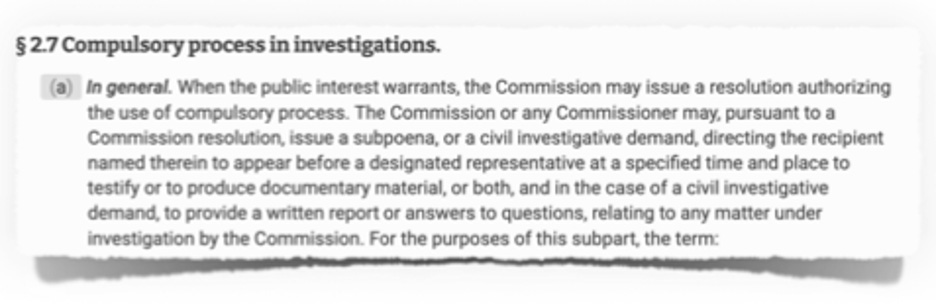

When one turns to the FTC’s regulations on inquiries, investigations, and compulsory processes, one finds that Commissioners, acting alone and pursuant to a resolution, may issue a subpoena or civil investigative demand:

So, Congress clearly gave this power to the FTC, and the agency’s regulations also provide for a single Commissioner to sign subpoenas and civil investigative demands pursuant to an FTC-passed resolution.

Additionally, this is not new. As Khan and Slaughter pointed out in their statement, “[o]mnibus resolutions have long been a mainstay of the agency’s investigative arsenal…[and] [p]rior to my joining the Commission, the agency had 56 omnibuses already in place—resolutions that frequently garnered bipartisan support, including from my dissenting colleagues.” Indeed, as one example, the FTC passed an omnibus resolution in 1999 (see here and here for majority and minority statements).

I hesitate to assign motive to Phillips and Wilson, but, unless I am missing something (always a possibility), they appear to be trying to throw sand in the gears, a respectable move from any loyal opposition anywhere. Nevertheless, their objections seem very, very thin as the majority appears to be on firm legal ground.

Other Developments

Photo by Joe Leahy on Unsplash

§ The United Kingdom’s (UK) Digital, Culture, Media and Sport Committee approved the appointment of New Zealand’s Privacy Commissioner to be the next UK Information Commissioner.

§ Federal Trade Commission (FTC) Commissioner Rohit Chopra’s nomination to serve as the next Director of the Consumer Financial Protection Bureau (CFPB) moved closer to a final vote in the Senate. Democrats succeeded in a motion to discharge his nomination from the Senate Banking, Housing, and Urban Affairs Committee by a 49-48 vote. Earlier this month, law school professor and privacy champion Alvaro Bedoya was nominated to be an FTC Commissioner.

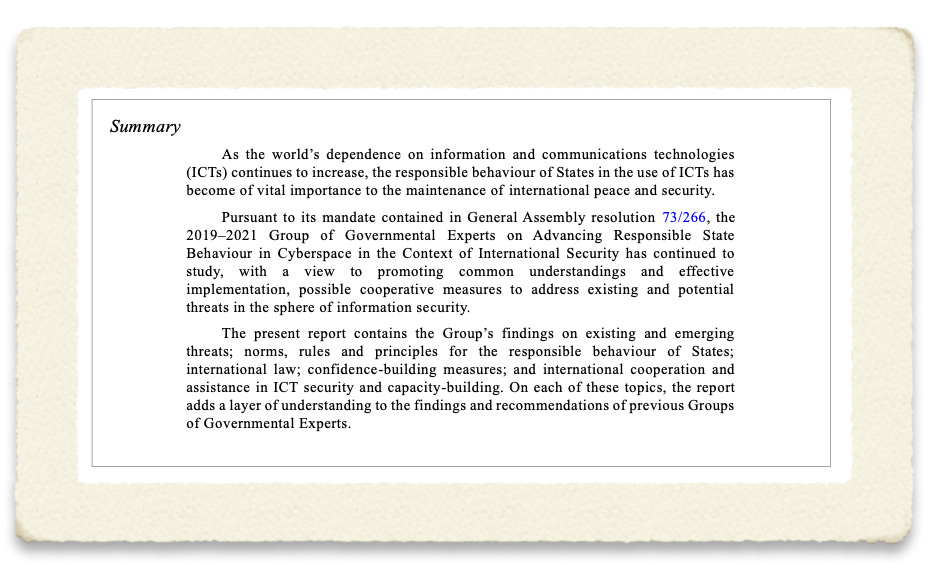

§ The United Nations (UN) Group of Governmental Experts (GGE) on Advancing responsible State behaviour in cyberspace in the context of international security issued its report and the “Official compendium of voluntary national contributions on the subject of how international law applies to the use of information and communications technologies by States submitted by participating governmental experts.” The GGE offered this summary:

§ In furtherance of its responsibilities to implement the “California Privacy Rights Act” (CPRA), the California Privacy Protection Agency is asking for “input from stakeholders in developing regulations” on these topic areas:

o Processing that Presents a Significant Risk to Consumers’ Privacy or Security: Cybersecurity Audits and Risk Assessments Performed by Businesses

o Automated Decisionmaking

o Audits Performed by the Agency

o Consumers’ Right to Delete, Right to Correct, and Right to Know

o Consumers’ Rights to Opt-Out of the Selling or Sharing of Their Personal Information and to Limit the Use and Disclosure of their Sensitive Personal Information

o Consumers’ Rights to Limit the Use and Disclosure of Sensitive Personal Information

o Information to Be Provided in Response to a Consumer Request to Know (Specific Pieces of Information)

o Definitions and Categories

§ Two Australian agencies have begun activities to implement the nation’s new “Online Safety Act 2021.”

o The Department of Infrastructure, Transport, Regional Development and Communications has made available two documents and asked for input:

§ Draft Online Safety (Basic Online Safety Expectations) Determination 2021—consultation paper

§ Draft Online Safety (Basic Online Safety Expectations) Determination 2021

o The eSafety Commissioner asked for input on these:

§ Developing “an implementation roadmap for a mandatory age verification (AV) regime relating to online pornography”

§ A “new Restricted Access System to limit the exposure of children and young people under 18 to some age-inappropriate online material”

§ New Zealand’s Privacy Commissioner “issued a compliance notice to the Reserve Bank of New Zealand, triggered by a cyber-attack in December 2020.” The Privacy Commissioner stated:

o This is the first time the Privacy Commissioner has issued a compliance notice since receiving these new powers in the Privacy Act 2020.

o As part of the investigation into the breach the Bank engaged KPMG to undertake an independent review of its systems and processes. The review revealed multiple areas of non-compliance with Privacy Principle 5.

o The compliance notice issued today provides a template for the Bank to report on to the Privacy Commissioner, confirming the improvements to their policies and procedures aimed to make the systems more secure.

§ United Nations (UN) High Commissioner for Human Rights Michelle Bachelet “stressed the urgent need for a moratorium on the sale and use of artificial intelligence (AI) systems that pose a serious risk to human rights until adequate safeguards are put in place…[and] also called for AI applications that cannot be used in compliance with international human rights law to be banned.” Bachelet’s comments were made in conjunction with the release of “a report that analyses how AI – including profiling, automated decision-making and other machine-learning technologies – affects people’s right to privacy and other rights, including the rights to health, education, freedom of movement, freedom of peaceful assembly and association, and freedom of expression.” Bachelet made these recommendations:

o The High Commissioner recommends that States:

§ (a) Fully recognize the need to protect and reinforce all human rights in the development, use and governance of AI as a central objective, and ensure equal respect for and enforcement of all human rights online and offline;

§ (b) Ensure that the use of AI is in compliance with all human rights and that any interference with the right to privacy and other human rights through the use of AI is provided for by law, pursues a legitimate aim, complies with the principles of necessity and proportionality and does not impair the essence of the rights in question;

§ (c) Expressly ban AI applications that cannot be operated in compliance with international human rights law and impose moratoriums on the sale and use of AI systems that carry a high risk for the enjoyment of human rights, unless and until adequate safeguards to protect human rights are in place;

§ (d) Impose a moratorium on the use of remote biometric recognition technologies in public spaces, at least until the authorities responsible can demonstrate compliance with privacy and data protection standards and the absence of significant accuracy issues and discriminatory impacts, and until all the recommendations set out in A/HRC/44/24, paragraph 53 (j) (i–v), are implemented;

§ (e) Adopt and effectively enforce, through independent, impartial authorities, data privacy legislation for the public and private sectors as an essential prerequisite for the protection of the right to privacy in the context of AI;

§ (f) Adopt legislative and regulatory frameworks that adequately prevent and mitigate the multifaceted adverse human rights impacts linked to the use of AI by the public and private sectors;

§ (g) Ensure that victims of human rights violations and abuses linked to the use of AI systems have access to effective remedies;

§ (h) Require adequate explainability of all AI-supported decisions that can significantly affect human rights, particularly in the public sector;

§ (i) Enhance efforts to combat discrimination linked to the use of AI systems by States and business enterprises, including by conducting, requiring and supporting systematic assessments and monitoring of the outputs of AI systems and the impacts of their deployment;

§ (j) Ensure that public-private partnerships in the provision and use of AI technologies are transparent and subject to independent human rights oversight, and do not result in abdication of government accountability for human rights.

o The High Commissioner recommends that States and business enterprises:

§ (a) Systematically conduct human rights due diligence throughout the life cycle of the AI systems they design, develop, deploy, sell, obtain or operate. A key element of their human rights due diligence should be regular, comprehensive human rights impact assessments;

§ (b) Dramatically increase the transparency of their use of AI, including by adequately informing the public and affected individuals and enabling independent and external auditing of automated systems. The more likely and serious the potential or actual human rights impacts linked to the use of AI are, the more transparency is needed;

§ (c) Ensure participation of all relevant stakeholders in decisions on the development, deployment and use of AI, in particular affected individuals and groups;

§ (d) Advance the explainability of AI-based decisions, including by funding and conducting research towards that goal.

o The High Commissioner recommends that business enterprises:

§ (a) Make all efforts to meet their responsibility to respect all human rights, including through the full operationalization of the Guiding Principles on Business and Human Rights;

§ (b) Enhance their efforts to combat discrimination linked to their development, sale or operation of AI systems, including by conducting systematic assessments and monitoring of the outputs of AI systems and of the impacts of their deployment;

§ (c) Take decisive steps in order to ensure the diversity of the workforce responsible for the development of AI;

§ (d) Provide for or cooperate in remediation through legitimate processes where they have caused or contributed to adverse human rights impacts, including through effective operational-level grievance mechanisms.

§ The United States (U.S.) Federal Acquisition Security Council (FASC) published a “final rule to implement the requirements of the laws that govern the operation of the FASC, the sharing of supply chain risk information, and the exercise of the FASC's authorities to recommend issuance of removal and exclusion orders to address supply chain security risks.” FASC continued:

o The Federal Acquisition Supply Chain Security Act of 2018 (FASCSA or Act) (Title II of Pub. L. 115-390), signed into law on December 21, 2018, established the Federal Acquisition Security Council (FASC). The FASC is an executive branch interagency council chaired by a senior-level official from the Office of Management and Budget. It includes representatives from the General Services Administration; Department of Homeland Security (DHS); Office of the Director of National Intelligence (ODNI); Department of Justice; Department of Defense (DOD); and Department of Commerce. The FASC is authorized to perform a variety of functions, including making recommendations for orders that would require the removal of covered articles from executive agency information systems or the exclusion of sources or covered articles from executive agency procurement actions.

o Pursuant to subsection 202(d) of the FASCSA, the FASC is required to prescribe first an interim final rule and then a final rule to implement subchapter III of chapter 13 of title 41, U.S. Code. The FASC published the interim final rule (interim rule) at 85 FR 54263 on September 1, 2020. The interim rule invited interested persons to submit comments on or before November 2, 2020. Six entities submitted comments. The final rule reflects changes made based upon some of those comments, as well as feedback received from internal Federal stakeholders. The final rule also corrects certain structural issues introduced by the interim rule, as explained in more detail in section III. This final rule retains the organization and much of the content of the interim rule. It contains three subparts. Subpart A explains the scope of the rule, provides definitions for relevant terms, and establishes the membership of the FASC. Subpart B establishes the role of the FASC's information sharing agency (ISA). DHS, acting primarily through the Cybersecurity and Infrastructure Security Agency, will serve as the ISA. The ISA standardizes processes and procedures for submission and dissemination of supply chain information and facilitates the operations of a Supply Chain Risk Management (SCRM) Task Force under the FASC. This FASC Task Force consists of designated technical experts who assist the FASC in implementing its information sharing, risk analysis, and risk assessment functions. Subpart B also prescribes mandatory and voluntary information sharing criteria and associated information protection requirements.

§ The United Kingdom’s House of Lords completed the second reading of the “Police, Crime, Sentencing and Courts Bill” (HL Bill 40), legislation that would give UK police expanded powers to search digital devices during a criminal investigation. According to an explanatory note:

o With so much more of individuals’ lives being lived online, important information for the prevention, detection, investigation or prosecution of crime is now held on digital devices, such as mobile phones. This includes information from complainants and witnesses in criminal proceedings. The arrangements by which such information is made available to law enforcement agencies, prosecutors and the defence are critical to confidence in the criminal justice system and to meeting the right to a fair trial. Extraction of information from digital devices has therefore become a more frequent and routine part of criminal investigations.

o In June 2020, the Information Commissioner’s Office published a report on police practice in England and Wales around the extraction and analysis of data from mobile phones and other electronic communication devices of victims, witnesses and suspects during a criminal investigation. The report identified inconsistencies in the approach taken by police forces to extract digital data and the complex legal framework that governs this practice. It recommended clarifying the lawful basis for data extraction and introducing a code of practice to guide this activity in order to increase consistency and ensure that any data taken is strictly necessary for the purpose of the investigation.

o Chapter 3 of Part 2 introduces a specific legal basis for the extraction of information from complainants’, witnesses’ and others’ digital devices. This will be a non-coercive power based on the agreement of the routine user of the device. It will be applicable to specified law enforcement and regulatory agencies, such as the police, who extract information to support investigations or to protect vulnerable people from harm. This will provide a nationally consistent legal basis for the purpose of preventing, detecting, investigating or prosecuting criminal offences and for safeguarding and preventing serious harm.

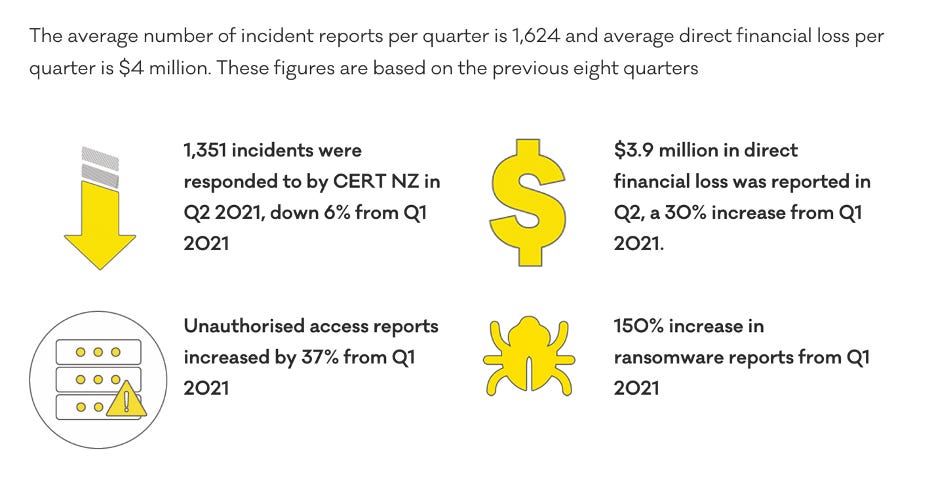

§ CERT NZ issued its Quarter Two (Q2) Report and offered this summary:

Further Reading

Photo by King's Church International on Unsplash

§ “Police In At Least 24 Countries Have Used Clearview AI. Find Out Which Ones Here.” By Ryan Mac, Caroline Haskins, and Antonio Pequeno IV — BuzzFeed News. Law enforcement agencies and government organizations from 24 countries outside the United States used a controversial facial recognition technology called Clearview AI, according to internal company data reviewed by BuzzFeed News. That data, which runs up until February 2020, shows that police departments, prosecutors’ offices, universities, and interior ministries from around the world ran nearly 14,000 searches with Clearview AI’s software. At many law enforcement agencies from Canada to Finland, officers used the software without their higher-ups’ knowledge or permission. After receiving questions from BuzzFeed News, some organizations admitted that the technology had been used without leadership oversight.

§ “Spies for Hire: China’s New Breed of Hackers Blends Espionage and Entrepreneurship” By Paul Mozur and Chris Buckley — The New York Times. China’s buzzy high-tech companies don’t usually recruit Cambodian speakers, so the job ads for three well-paid positions with those language skills stood out. The ad, seeking writers of research reports, was placed by an internet security start-up in China’s tropical island-province of Hainan. That start-up was more than it seemed, according to American law enforcement. Hainan Xiandun Technology was part of a web of front companies controlled by China’s secretive state security ministry, according to a federal indictment from May. They hacked computers from the United States to Cambodia to Saudi Arabia, seeking sensitive government data as well as less-obvious spy stuff, like details of a New Jersey company’s fire-suppression system, according to prosecutors.

§ “Chinese Police Kept Buying Cellebrite Phone Crackers After Company Said It Ended Sales” By Mara Hvistendahl — The Intercept. In its bid to go public next week, Israeli cellphone hacking company Cellebrite has tried to present itself as a defender of global human rights, highlighting its withdrawal from Bangladesh, Belarus, China, Hong Kong, Russia, and Venezuela. In a presentation to investors filed with the U.S. Securities and Exchange Commission earlier this month, the company claimed that its mission was to “protect and save lives, accelerate justice and preserve privacy in global communities.”

§ “The Curious Omission in Russia’s New Security Strategy” By David Shedd and Ivana Stradner — Defense One. After spending most of 2021 unleashing cyberattacks on a range of Western nations, Russia recently released its new National Security Strategy, or NSS, a consequential document in which the word “cyber” is conspicuously absent. The omission is not a matter of translation—it’s strategic. It is high time U.S. policymakers began to understand what Russia’s curious word choice reveals about its cyber schemes. Russia’s goals for digital conflict are much broader than shutting down pipelines and stealing data. Kremlin officials also want to influence the minds and ultimately the behavior of their adversaries. Instead of the term “cyber security,” (кибербезопасность) the NSS speaks of “information security.” (информационная безопасность) This may seem like a semantic difference, but it is intentional and consequential in the language of the Kremlin.

§ “Israel’s Spy Agency Snubbed the U.S. Can Trust Be Restored?” By Julian E. Barnes, Ronen Bergman and Adam Goldman — The New York Times. The cable sent this year by the outgoing C.I.A. officer in charge of building spy networks in Iran reverberated throughout the intelligence agency’s Langley headquarters, officials say: America’s network of informers had largely been lost to Tehran’s brutally efficient counterintelligence operations, which has stymied efforts to rebuild it. Israel has helped fill the breach, officials say, its robust operations in Iran providing the United States with streams of reliable intelligence on Iran’s nuclear activities, missile programs and on its support for militias around the region.

§ “China seeks to expand influence in Africa with more digital projects” By Sarah Zheng — The South China Morning Post. China said it would step up digital cooperation and investments in Africa, as Beijing seeks to deepen its influence on the continent alongside its pledges for trade, infrastructure and Covid-19 vaccines. At a time when the US is also seeking to reinvigorate its trade and investment with Africa, assistant foreign minister Deng Li told a virtual forum on Tuesday that China would boost its partnership with African nations in areas such as the digital economy, smart cities and 5G networks.

§ “Justice Dept. Is Said to Accelerate Google Advertising Inquiry” By Cecilia Kang — The New York Times. The Department of Justice has accelerated an investigation into Google’s digital advertising practices and may file an antitrust lawsuit against the internet giant before the end of the year, two people with knowledge of the government’s thinking said on Wednesday. The investigation focuses on Google’s power in the digital ad market, looking at how the company uses its dominance in auctions and ad technology to maintain its power, said the people, who were not authorized to speak publicly. In recent weeks, the Justice Department has called in more third parties as witnesses and asked for documents and interviews, in a sign it has picked up the investigation’s pace, the people said.

§ “Tech companies face pressure over January 6th subpoenas” By Russell Brandom — The Verge. As Congress pushes for more details of the January 6th attack on the Capitol, tech companies have found themselves caught between a new request from the select committee investigating the attack and ominous threats from Republicans hoping to stall the committee’s investigation. Pushing for new details about communications between Republican members of Congress and President Trump during the attack, the House select committee sent data requests on Monday ordering the preservation of phone records and other communications related to the January 6th attack. Requests went out to 35 companies, including Facebook, Twitter, Google, and Microsoft. Wireless providers like AT&T, T-Mobile, and Verizon Wireless also received the request.

§ “Google appeals $591M French fine in copyright payment spat” — The Associated Press. Google is appealing a 500 million euro ($591 million) fine issued by French regulators over its handling of negotiations with publishers in a dispute over copyright. The dispute is part of a larger battle by authorities in Europe and elsewhere to force Google and other tech companies to compensate publishers for content. “We disagree with a number of legal elements, and believe that the fine is disproportionate to our efforts to reach an agreement and comply with the new law,” Google France Vice President Sebastien Missoffe said in a press statement.

§ “Machines can read your brain. There’s little that can stop them.” By Melissa Heikkila — Politico EU. In 2019, Rafael Yuste successfully implanted images directly into the brains of mice and controlled their behavior. Now, the neuroscientist warns that there is little that can prevent humans from being next. If used responsibly, neurotechnology — in which machines interact directly with human neurons — can be used to understand and cure stubborn illnesses like Alzheimer's and Parkinson's disease, and assist with the development of prosthetic limbs and speech therapy. But if left unregulated, neurotechnology could also lead to the worst corporate and state excesses, including discriminatory policing and privacy violations, leaving our minds as vulnerable to surveillance as our communications.

§ “The Secret Bias Hidden in Mortgage-Approval Algorithms” By Emmanuel Martinez and Lauren Kirchner — The Markup. The new four-bedroom house in Charlotte, N.C., was Crystal Marie and Eskias McDaniels’s personal American dream, the reason they had moved to this Southern town from pricey Los Angeles a few years ago. A lush, long lawn, 2,700 square feet of living space, a neighborhood pool and playground for their son, Nazret. All for $375,000. Prequalifying for the mortgage was a breeze. They said they had saved much more than they would need for the down payment, had very good credit—scores of 805 and 725—and earned roughly six figures each, she in marketing at a utility company and Eskias representing a pharmaceutical company. The monthly mortgage payment was less than they’d paid for rent in Los Angeles for years.

§ “Apple hit with antitrust case in India over in-app payments issues” By Aditya Kalra — Reuters. Apple Inc is facing an antitrust challenge in India for allegedly abusing its dominant position in the apps market by forcing developers to use its proprietary in-app purchase system, according to a source and documents seen by Reuters. The allegations are similar to a case Apple faces in the European Union, where regulators last year started an investigation into Apple's imposition of an in-app fee of 30% for distribution of paid digital content and other restrictions.

§ “Amazon denies reports that it will proactively moderate content on its hosting service” By Russell Brandom — The Verge. Amazon is planning to expand its in-house moderation team for Amazon Web Services, according to a report published on Thursday by Reuters. Citing two sources, the report says Amazon is planning to use the new workforce to proactively remove more prohibited content from AWS before it’s reported by users. Reached for comment on Thursday, Amazon said it did not plan to pre-review content before it is posted on the platform, but declined to confirm or deny specifics. On Friday, however, Amazon followed up with a more strongly worded statement directly contesting that the team’s methodology would change.

§ “Trial & Error in Kuwait” By Sean Lyngaas — Mohammed Aldoub stared at his computer. It was late March 2019, and Aldoub had begun his day as he often does, by scouring the internet for security issues from his house in Kuwait City — the sweltering, palm-tree-lined capital of Kuwait — when he came upon a file on VirusTotal, a platform that researchers use to analyze malicious code.

§ “European lawmakers welcome South Korean action on Apple, Google app stores, promise more regulatory efforts” By Reis Thebault — Washington Post. South Korea’s passage of a bill to restrict how Apple and Google can operate their app stores was welcomed in the European Union, home to some of the world’s most ambitious attempts to regulate Big Tech companies. But European legislators also said they favored a broader set of regulations. Marcel Kolaja, a vice president of the European Parliament and a former software engineer, said Wednesday that the South Korean legislation, which would prohibit Apple and Google from requiring that developers use their in-app payment systems, is a sign that the tech giants’ app store dominance is “being addressed globally, which is absolutely needed.”

Coming Events

Photo by Mitchell Orr on Unsplash

§ 22 September

o The House Homeland Security Committee will hold a hearing titled “Threats to the Homeland: Evaluating the Landscape 20 Years After 9/11” with these witnesses:

§ Secretary of Homeland Security Alejandro N. Mayorkas

§ Federal Bureau of Investigation Director Christopher A. Wray

§ National Counterterrorism Center Director Christine Abizaid

§ 23 September

o The United Kingdom’s Joint Select Committee will hold a hearing on the government’s draft “Online Safety Bill.”

o The Senate Homeland Security and Governmental Affairs Committee will hold a hearing titled “National Cybersecurity Strategy: Protection of Federal and Critical Infrastructure Systems,” with these witnesses:

§ National Cyber Director Chris Inglis

§ Cybersecurity and Infrastructure Security Agency Director Jen Easterly

§ Federal Chief Information Security Officer Christopher DeRusha

o The House Judiciary Committee’s Antitrust, Commercial, and Administrative Law Subcommittee will hold a hearing titled “Reviving Competition, Part 4: 21st Century Antitrust Reforms and the American Worker.”

§ 24 September

o The California Privacy Protection Agency Board will be holding a meeting.

§ 28 September

o The Information Security and Privacy Advisory Board (ISPAB) will hold an open meeting, and “[t]he agenda is expected to include the following items:

§ Board Discussion on Executive Order 14028, Improving the Nation's Cybersecurity (May 12, 2021) deliverables and impacts to date,

§ Presentation by NIST, the Department of Homeland Security, and the General Services Administration on upcoming work specified in Executive Order 14028,

§ Presentation by the Office of Management and Budget on Executive Order 14028 directions and memoranda to U.S. Federal Agencies,

§ Board Discussion on recommendations and issues related to Executive Order 14028.”

§ 29 September

o The White House announced a meeting of the U.S.-EU Trade and Technology Council (TTC), a body established in June 2021 “to expand and deepen trade and transatlantic investment ties and update the rules of the road for the 21st century economy.”

§ 30 September

o The Federal Communications Commission (FCC) will hold an open meeting with this tentative agenda:

§ Promoting More Resilient Networks. The Commission will consider a Notice of Proposed Rulemaking to examine the Wireless Network Resiliency Cooperative Framework, the FCC’s network outage reporting rules, and strategies to address the effect of power outages on communications networks. (PS Docket Nos. 21-346, 15-80; ET Docket No. 04-35)

§ Reassessing 4.9 GHz Band for Public Safety. The Commission will consider an Order on Reconsideration that would vacate the 2020 Sixth Report and Order, which adopted a state-by-state leasing framework for the 4.9 GHz (4940-4990 MHz) band. The Commission also will consider an Eighth Further Notice of Proposed Rulemaking that would seek comment on a nationwide framework for the 4.9 GHz band, ways to foster greater public safety use, and ways to facilitate compatible non-public safety access to the band. (WP Docket No. 07-100)

§ Authorizing 6 GHz Band Automated Frequency Coordination Systems. The Commission will consider a Public Notice beginning the process for authorizing Automated Frequency Coordination Systems to govern the operation of standard-power devices in the 6 GHz band (5.925-7.125 GHz). (ET Docket No. 21-352)

§ Spectrum Requirements for the Internet of Things. The Commission will consider a Notice of Inquiry seeking comment on current and future spectrum needs to enable better connectivity relating to the Internet of Things (IoT). (ET Docket No. 21-353)

§ Shielding 911 Call Centers from Robocalls. The Commission will consider a Further Notice of Proposed Rulemaking to update the Commission's rules regarding the implementation of the Public Safety Answering Point (PSAP) Do-Not-Call registry in order to protect PSAPs from unwanted robocalls. (CG Docket No. 12-129; PS Docket No. 21-343)

§ Stopping Illegal Robocalls From Entering American Phone Networks. The Commission will consider a Further Notice of Proposed Rulemaking that proposes to impose obligations on gateway providers to help stop illegal robocalls originating abroad from reaching U.S. consumers and businesses. (CG Docket No. 17-59; WC Docket No. 17-97)

§ Supporting Broadband for Tribal Libraries Through E-Rate. The Commission will consider a Notice of Proposed Rulemaking that proposes to update sections 54.500 and 54.501(b)(1) of the Commission’s rules to amend the definition of library and to clarify Tribal libraries are eligible for support through the E-Rate Program. (CC Docket No. 02-6)

§ Strengthening Security Review of Companies with Foreign Ownership. The Commission will consider a Second Report and Order that would adopt Standard Questions – a baseline set of national security and law enforcement questions – that certain applicants with reportable foreign ownership must provide to the Executive Branch prior to or at the same time they file their applications with the Commission, thus expediting the Executive Branch’s review for national security and law enforcement concerns. (IB Docket No. 16-155)